GlassEar

Project Description

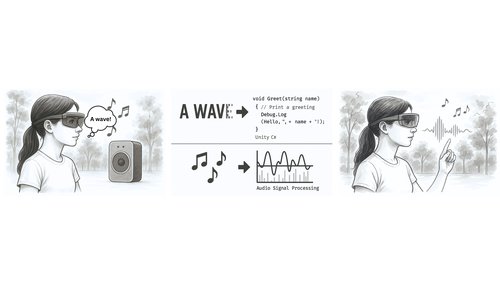

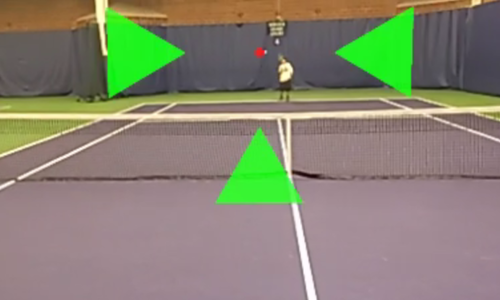

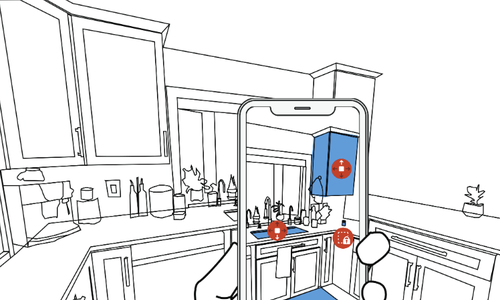

Persons with hearing loss use visual signals such as gestures and lip movement to interpret speech. While hearing aids and cochlear implants can improve sound recognition, they generally do not help the wearer localize sound, which is necessary to leverage these visual cues. In this paper, we design and evaluate visualizations for spatially locating sound on a head-mounted display (HMD). To investigate this design space, we developed eight high-level visual sound feedback dimensions. For each dimension, we created 3 to 12 example visualizations and evaluated them as a design probe with 24 deaf and hard of hearing participants (Study 1). We then implemented a real-time proof-of-concept HMD prototype and solicited feedback from 4 new participants (Study 2). Study 1 findings reaffirm past work on the challenges that persons with hearing loss face in group conversations, support the general idea of sound awareness visualizations on HMDs, and reveal preferences for specific design options. Although preliminary, Study 2 further contextualizes the design probe and uncovers directions for future work.

This project is part of a larger research agenda exploring sound awareness support for people who are deaf or hard of hearing.

Publications

Talks

Head-Mounted Display Visualizations to Support Sound Awareness for Deaf and Hard of Hearing Users

Apr 20, 2015 | CHI 2015

Seoul, Korea